“Architecture” hereafter refers to the cultural discourse of creating worlds, interiorities mediated through physicality, and virtuality. What is interiority then? Not bound to physical interiors per se or enclosed places, it is an emergent characteristic that supports a sense of being consciously present within a space.

In that complex ecosystem, the virtual enters and gains ground fast. The distinction becomes increasingly difficult to draw, not only between the physical and the virtual but also between the territories where that difference matters. The effects of projecting through each of the media, and the impossibility of achieving certain affects using solely one or the other, make it progressively essential to work with both.

Architectural volume, contrary in significance to space, is “unlimitless” – in other words, finite, with a secret desire of being geometrical. It is an independent entity that is bound, produced by sets of tricks played on the space-time continuum. Hence, interiority as volumetricizing space. That is to say, volume is still space, but a designed one.

This is history, and not a distant one, surfacing from within the dominant physical-virtual duality: a universe built on an abundant set of distinct worlds. A model in a computer, an animated filter on an iPhone screen, an immersive virtual reality experience: these all appear to be stand-alone happenings. They are not quite stand-alone, though; that is only what our medium of consumption suggests. Through the screen of a mobile phone, what we see and interact with makes the whole story of that footprint vanish. It becomes uncertain whether that footprint is even real, visible, or existent at all.

By consciously shaping a piece of space, design unconsciously – usually – shapes all the rest: i.e., the outside, as opposed to an inside, a negative space, an ‘other’ space, the areas unconsidered. Consequently, by designing our context, we have unconsciously designed our brains, both through the designing and the inhibition of these designed voids.

With the objective of designing for a brain, design and the brain both fall under the light. The brain, as an external reference to design, is a territory of a new nature, an electrical living lump that designers will eventually need to deal with.

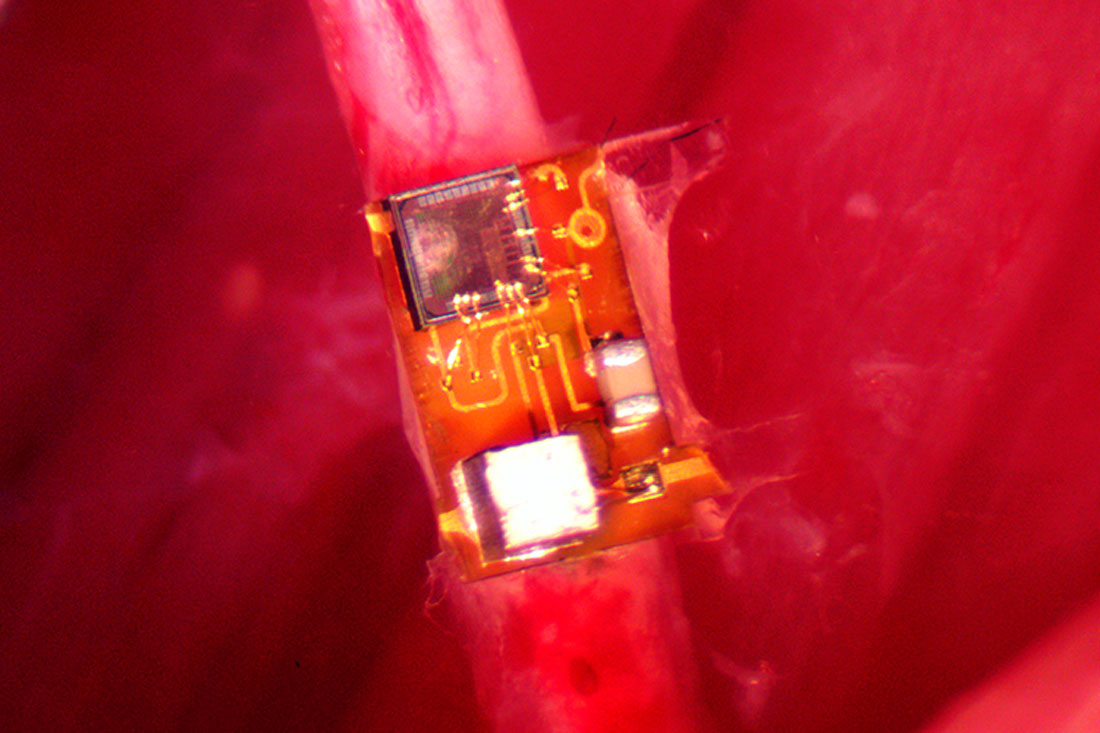

The scientists recorded the subject’s brain waves using up to 70 implanted electrodes. The image is credited to Gregor Gast, UKB.

At the heart of the battle, design, experience, and aesthetics, ancient central figures of the practice, are to be stretched in an attempt to change the medium in which they are conducted throughout. From pen to pixel, to bits and atoms, to a new hybrid that unifies both. As the virtual and the real marry, the artificial and the living meld.

The task then is not to reduce experience to neural activity. Not because the hypothesis is unviable, but because of our limited capacity to arrive at an answer, so far. But whether that turns out to be workable or not, architecture needs to define a new typology of the brain – one which it has shaped and has been shaped by.

Accordingly, the main objective is to understand the brain, in relation to its experiential context. Hence, rather than neuroticizing aesthetics, architecture needs to aestheticize the brain. To propose a new way not only of looking at things but redefining them. Technology has shaped design, as much as design has shaped technology, and with that projective attitude, an architectural brain pulsates awaiting interventions.

What is the brain in architectural terms? I put forward the following equation, the brain as a function of interiority: f(interiority), a new function that suggests a causal and qualitatively dependent relation between interiority and the human as we know it.

This statement echoes the direct bond between presence and space, in which the former is unthinkable without the latter. Eisenman has repeatedly tried to put forward space as the locus of the metaphysics of presence. That metaphysics, on the other side, comes to stand face to face with a desire for immediate access to meaning and “builds a metaphysics or ontotheology based on privileging presence over absence”.

We tend to consume or dominantly think about our environments in a strongly optical manner. From that optical, a visual emerges, a meta-optical: a visual that is to the optical, what perception is to cognition. Selective, relative, bidirectional and ever-changing.

But what is next? From drawings to algorithms, from physical computing to biocomputing, the interface is key. Paper, screens, lights, sounds, or scents at best, all external still, have mediated between us and what these things represent. While that might sound undermining to the wholeness of spaces, it is important to note that our senses mediate between us and what is out there, and that, as we become interfaces, we share our “interfaceness” with the actual environments. The human skin, that thin layer of interface, tends to be shared with space. Do we share it? Is the skin the world’s means into us? Would it be the space’s equivalent of a touch screen? Is skin our limit or space’s limit? It is the middle ground; it is what vanishes as an interface. That agency does not quarantine its object; on the contrary, it presents it as a new design ground.

On the other end of the scale, new users channel the production of space into a multi-user object, plural in terms of nature and type. In parallel, environments have changed, in nature and type too. As we live in this valley between the real and the virtual, and all the possible hybrids that emerge, we realize the variety of dimensions such spaces could have.

Designing for the brain, or in other terms redesigning the brain, requires at its optimum, given the state of the art, interacting with the brain directly, without passing along the bridge of the senses. Technology, rather than the senses, becomes the interface. That claim then puts into question the way design is brought into the world. How is design produced when housed by brain chips? Our nervous system is electric in the way it receives inputs and transmits outputs, and hence requires a compatible other to interact with.

Architecture is not just physical building, decorated or transmitted through media; it is itself media too. Spreading out that other wing is a means to approach a way of creating worlds, rejecting the optical as its epicenter. Perhaps meaning too. What do the design process, thinking, and production prioritize then? The answer is nothing. It sits in the bounds of the ability to be nested in a chip but is designed to facilitate the contrary: to allow a new way of equal-grounds communication.

Ingesting or injecting a world into a brain sounds futuristic, and it is. Broken down, however, it is less so where motor control is concerned: that has been around for some time, from José Delgado’s bull experiment to a veteran’s prosthetic that is capable of maintaining sensory perception while being brain-controlled. Furthermore, it is not limited to motor control (as in self, or biological body parts, most common in limbs) but extends to prosthetics too. And as a new type of prosthetic emerges – not only controlled by the brain but actually sending data back to the brain, developed by Hugh Herr for the Center for Extreme Bionics at the MIT Media Lab – a new horizon opens up.

The external data changes the internal circuitry, celebrating a bidirectional data highway that comes as a second step towards a more diverse system, one not limited to the motor aspects but extending to more complex areas such as space perception.

As worlds become nested into bits, and bits into pocket-sized devices, designed environments expand from a mere environment to a more filter-like nature, augmenting the actual with real virtuality. Upon the strata of what makes up the actual, or the real, sits a set of layers, some of which we experience, and a lot that we don’t. In alignment with that, it is not only that objects unfold unevenly and partially; what we take out of the object does not necessarily belong to the object in the first place. It is an ideological projection of our own.

Simultaneously, whatever we additionally uncover through technology (where the senses are taken as ground zero) is potentially as real as anything else. It enters the frame of that which we interact with, affectively and effectively. In a parallel dimension, filters tend to reinterpret what actually is and manipulate its pixels to present it in a new form, a new image perhaps. Is the filtered image a new image, or is it still the same image? The question ceases to be relevant at the functional front, as the image has been transformed, stored as a new image, and has a new set of relations with the world and the user. Likewise, designed realities that acquire an architectural relevance could be attained not only by designing physical or virtual environments, but by systematically manipulating the real by changing its affective impact and maintaining at a margin its effective role. What this implies, then, is a set of diverse possibilities, nested one in another, that produce a new, space-on-demand type of experiential domain: a set of choices to pick from or to have imposed.

UC Berkeley, StimDust, Photo credit: Rikky Muller.

This digital shelf of infinite possible worlds, each existing on its own in a cloud space, lacks an interface – what is currently referred to as virtual/augmented/mixed reality hardware. What these sets of hardware (and software) act upon is human visual perception. The best of them are the ones that trick our sensory perception most effectively. This sort of interface, on the other hand, is a medium to be eliminated in favor of a more direct communication front. I propose here the idea of the minimal interface interaction protocol, subsequently arriving at a direct communication between what is and our perception.

At first, it sounds technically impossible to eliminate an interface when dealing with two objects. Object A interacts with Object B, with Object C as a mediator – an interface. When Object C is eliminated, and if an interaction is possible, both Objects A and B break down into an object and an interface simultaneously, either partially or as a whole. It implies passing the agency into a more indigenous part of the system, rather than importing external third-party objects.

Downloading worlds then becomes a new means of direct interaction between the bits that make up the virtual and the cells and atoms that make up the human subject. When not limited to humans, however, and as sensate artificially intelligent mineral assemblages require a certain context to perform in an altered manner, hacking the machine system would be enough to trick that machine into coming forth in a different mode, thereby manipulating the brain. Neurophysiological data acquired while these downloaded worlds are examined by the user is the food for training the algorithms here.

Feedback here is more a yield than a response. It is an asset on its own; it is the meat on which the ecology survives. No longer exclusive to the user nor to the environment, a complex array of feedback, together, formulates a new meaning map on which the system thrives. The users’ response is distilled down to data that feeds the environments’ behavioral decision grounds, and vice versa. Consequently, feedback becomes a new reflection. Feedback is the other’s image.

Entangled in this complex space, that ultra-dependence recreates the user.

Monetizing the neural landscape is a mighty new driver for this ecology. Within the landscape of the marketplace, sensing opens the doors to a new type of product by monetizing private personal data about people’s lives through a sensate artificial intelligence force that renders out a new product based on the patterns, statistics, and complex algorithms that drive that entity. Our future behaviors are the product, and it is already on the market.